We’ve all heard about the carbon footprint of flying, our diets, our homes, and the cars we drive. But there’s a new sustainability challenge that most of us are engaging with every day, often without a second thought: Artificial Intelligence.

Whether you’re asking Copilot to write a summary of a work email, using ChatGPT to review your latest research paper, or relying on tools like Outlook to autocomplete your sentences, you are using AI, and quite a bit of energy in the process.

But here’s the challenging part: unlike our homes, cars, and diets, where we have significant control over our choices, AI’s environmental impact is mostly locked behind closed doors. When you send a prompt, you don’t see the massive racks of servers, the powerful GPUs, and the millions of gallons of water used to cool them in data centres across the world.

So, if all of this is happening in high-tech data centres owned by tech giants, what can a person or an organisation like our university do about it? Are we just passive consumers of a new, carbon-intensive technology? The good news is that we don’t have to be.

We can make choices that reduce our environmental footprint when using AI, and we are actively doing so. The real power lies in two simple choices: where and how we use AI.

The Hidden Costs of a Single Prompt

Every time you use an AI model (like ChatGPT or Claude), you’re triggering a chain reaction of environmental costs. These costs can be broken into three categories:

1. The Sunk Cost: Model Training

Before you could type your first prompt, AI engineers had to train the model. This process involves feeding the model massive amounts of data (often a large share of the public internet) and running it on thousands of energy-intensive GPUs (Graphics Processing Units) for weeks or even months.

- Energy Use: Training large language models like GPT-4 can consume as much energy as powering 120 UK homes for an entire year. OpenAI’s GPT-3 training was estimated to emit 552 metric tonnes of carbon dioxide.

- Your Control: Unfortunately, these models have already been trained by the time they’re in your hands as an end user. Here, the responsibility lies with AI companies to reduce their training footprint by using data centres powered by renewable energy, which some (like Microsoft and Google) are increasingly adopting.

2. The Physical Cost: The Hardware

AI doesn’t exist in a magic cloud; it runs on physical computers. These computers, mostly built by companies like Nvidia, require an energy-intensive manufacturing process that involves mining rare earth metals and producing GPUs. Additionally, data centres that house these machines must be built, maintained, and cooled.

- Water Use: Cooling data centres can consume millions of gallons of water annually. For example, a single Google data centre in Oregon used 274 million gallons of water in 2021.

- Your Control: Again, individual users can’t directly influence hardware manufacturing, but we can advocate for transparency and sustainability from AI providers. Look for companies that prioritise energy-efficient hardware, carbon offsets, and renewable energy sources.

3. The Operational Cost: Inference Tasks

Finally, we get to what we can control. Every time you hit “Enter” on a prompt, you trigger what’s called an inference task, the process of the AI “thinking” and generating a response. This requires computational power, which consumes electricity and generates heat. That heat then requires cooling, which involves even more energy and water.

- Energy Use: Each inference task consumes a small amount of energy. With billions of prompts being sent daily, the collective energy use is enormous.

- Your Control: The good news is that you can directly reduce this cost by making smarter choices about how you use AI.

Our choices on how we use AI do matter

Since we can’t control training or hardware manufacturing, our focus should be on the inference stage, where we have the most influence. Here’s how we can make a difference:

The Where: Use Sustainable Data Centres

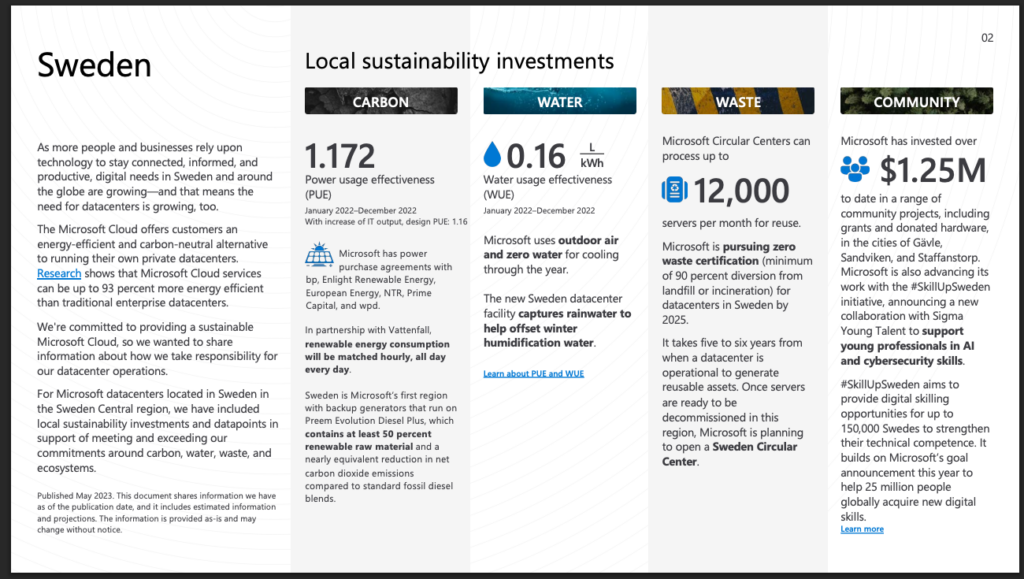

Not all data centres are created equal. Some are powered by coal and gas, while others are intentionally located in regions with abundant renewable energy. For example, Microsoft’s AI data centres in Sweden run on 100% renewable energy, significantly reducing the carbon footprint of AI tasks.

At the University of Birmingham, we are prioritising sustainable innovation wherever we can. Our new university AI platform, launching later in 2026, is designed to use Microsoft Azure’s Sweden Central region so that AI inference can run on 100% renewable energy. While many specialised models on our platform do need to be hosted across distributed global data centres, we are giving our staff and students the power to choose more carbon-aware LLMs (large language models) that are deployed specifically on 100% renewable energy sources.

Addressing concerns over the massive energy demands of AI, Microsoft is pairing its infrastructure growth with aggressive sustainability goals. The tech giant has already secured nearly 1 GW of renewable energy in Sweden, bolstered by a strategic partnership with state-owned utility Vattenfall to ensure its data centres remain as green as they are powerful.

- Impact: By choosing a renewable-powered data centre, the carbon footprint of AI tasks can be reduced by up to 98%. For example, Microsoft reports that its Sweden data centre emits only 0.02 metric tonnes of CO₂ per megawatt-hour compared to 0.85 metric tonnes for a coal-powered data centre.

- Note: The University’s platforms can provide access to select AI models hosted in Sweden that operate on 100% renewable energy. While not all available models meet this specific sustainability standard, we empower our community to make environmentally conscious choices by highlighting these renewable-powered options.

Read more about the Microsoft commitment to net zero energy production in Sweden here.
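The "up to 98%" figure in the Impact point above can be checked with a little arithmetic. The sketch below uses only the two emission intensities quoted in this article (0.02 and 0.85 metric tonnes of CO₂ per megawatt-hour). The 10 MWh workload is a purely hypothetical number chosen for illustration, not a measurement of any real AI service.

```python
# Emission intensities quoted in the article, in metric tonnes of CO2 per MWh.
SWEDEN_INTENSITY = 0.02
COAL_INTENSITY = 0.85


def relative_reduction(low: float, high: float) -> float:
    """Fraction of emissions avoided by moving from `high` to `low` intensity."""
    return (high - low) / high


def emissions_tonnes(energy_mwh: float, intensity: float) -> float:
    """Emissions for a given amount of electricity at a given intensity."""
    return energy_mwh * intensity


reduction = relative_reduction(SWEDEN_INTENSITY, COAL_INTENSITY)
print(f"Reduction: {reduction:.1%}")  # prints "Reduction: 97.6%"

# Hypothetical workload (assumption for illustration only): 10 MWh of inference.
HYPOTHETICAL_MWH = 10
print(emissions_tonnes(HYPOTHETICAL_MWH, SWEDEN_INTENSITY), "tonnes on renewables")
print(emissions_tonnes(HYPOTHETICAL_MWH, COAL_INTENSITY), "tonnes on coal")
```

The result, roughly 97.6%, rounds to the 98% quoted above. The comparison is against a fully coal-powered data centre, so the saving relative to a mixed-grid data centre would be smaller.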

The How: Match the Model to the Task

Not all AI tasks require the same level of computational power. Using a large language model like GPT-5 for a simple task, such as summarising an email, is like driving a lorry to the corner shop. It’s not required, it will take you ages to park, and it’s not the right tool for the job. You need something smaller.

- Use smaller models for simpler tasks: For tasks like grammar correction, summarising emails, or creating presentations, use smaller models like GPT-mini, Claude Sonnet, or Llama. Save the larger models for complex tasks like research or multi-step problem-solving.

- Be concise with your prompts: Every piece of text the AI processes, whether a word, part of a word, or a punctuation mark (each called a “token”), consumes energy. Writing clear, specific prompts not only saves energy but also improves the quality of the AI’s response. Avoid generating long, unnecessary outputs that you won’t read.
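The two habits above can be sketched in a few lines of code. This is an illustration only, resting on stated assumptions: the task labels, the "small" and "large" tier names, and both function names are hypothetical, and the four-characters-per-token figure is a commonly cited rule of thumb for English text, not an exact count. Real tokenisers vary from model to model.

```python
# Rough rule of thumb for English text (an assumption, not an exact figure).
CHARS_PER_TOKEN = 4

# Hypothetical labels for the simpler tasks named in the list above.
SIMPLE_TASKS = {"grammar", "summarise_email", "presentation"}


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, round(len(text) / CHARS_PER_TOKEN))


def pick_model_tier(task: str) -> str:
    """Send simple tasks to a small model; keep the large one for the rest."""
    return "small" if task in SIMPLE_TASKS else "large"


verbose_prompt = (
    "Hello! I hope you are well. I was wondering whether you might possibly "
    "be able to help me out by taking a look at the email below and, if it "
    "is not too much trouble, giving me some kind of summary of it. Thanks!"
)
concise_prompt = "Summarise this email in three bullet points."

print("Verbose prompt:", estimate_tokens(verbose_prompt), "tokens (approx.)")
print("Concise prompt:", estimate_tokens(concise_prompt), "tokens (approx.)")
print("Email summary ->", pick_model_tier("summarise_email"), "model")
print("Multi-step research ->", pick_model_tier("research"), "model")
```

The concise prompt asks for the same thing using a fraction of the tokens, and it also caps the length of the reply by asking for three bullet points.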

What We Can Do as a University

As an educational institution, we have a responsibility to lead by example. Just as we’re developing sustainability guidelines for travel, food, and energy consumption, we need to integrate AI into our sustainability strategy. This includes:

- Providing access to sustainable AI platforms: Our new private AI platform will leverage Microsoft’s renewable-powered Sweden data centres.

- Educating users: We need to develop resources to help staff and students choose the right AI model for the right task and reduce token waste.

- Advocating for transparency: We need to work with AI providers to ensure they adopt sustainable practices in training, hardware manufacturing, and operations, as we do with all our other providers.

View the full details from the image above here.

The UK Picture: AI and Sustainability

It’s important to acknowledge the reality of AI’s environmental impact. The development of large-scale AI models is inherently resource-intensive, and the UK is not a global leader in AI infrastructure. As a country, we have limited influence over the carbon-intensive practices of major tech companies.

However, just as we’ve made sustainable choices in other areas of our lives, such as recycling, buying electric cars, and reducing home energy use, we can do the same with AI. By making conscious choices about where and how we use AI, we can reduce its environmental impact and push for a more sustainable future for this technology.

Continue to be a conscious consumer

We increasingly ask where our food comes from and how our travel affects the planet. It is time we ask the same of our AI. The technology is here to stay, yet its ecological path is not yet fixed. The key to sustainable AI lies in intentional usage: selecting green-powered providers, matching model scale to the specific task, writing efficient prompts, and sometimes even choosing not to use AI at all! Together, these choices transform AI from a carbon burden into a responsible tool for progress.

We might be just one university, but collectively, we can have a significant impact on how AI is used and how sustainable it becomes.

A note from the author of the article

I’ve always found writing my ideas down to be a challenge, and AI has become an invaluable tool in helping me express them more effectively. While the spelling, grammar, and structure of this article were refined with AI’s support, the core ideas, themes, and commentary were entirely my own, with reference to the sources below.

Moreover, the AI technology I used to craft this piece operates more sustainably than most other AI platforms, running on 100% renewable energy sources in the Azure Sweden Central data centre. This reflects our commitment in IT Innovation to leveraging AI technology responsibly and as sustainably as possible.

Further reading

| Topic | Source | Key Data |
| --- | --- | --- |
| Training Energy Use | Strubell, E. et al. (2019). Energy and Policy Considerations for NLP. arXiv | Training GPT-3 emitted 552 metric tonnes of CO₂. |
| Data Centre Energy Use | Jones, N. (2018). How to stop data centres gobbling electricity. Nature | Data centres use ~1% of global electricity. |
| Water Use | Google Environmental Report (2021). Google Sustainability | A Google data centre in Oregon used 274M gallons of water in 2021. |
| Carbon Emissions | Microsoft Sustainability Report (2023). Microsoft | Sweden data centre emits 0.02 metric tonnes CO₂/MWh vs. 0.85 for coal-powered centres. |
| Inference Energy Use | Patterson, D. et al. (2021). Carbon Emissions and Neural Networks. arXiv | Inference tasks consume energy comparable to boiling a kettle. |
| Efficiency of Small Models | OpenAI Blog (2023). Scaling Laws for Neural Language Models. OpenAI | Smaller models use significantly less energy for simple tasks. |
| Renewable AI Infrastructure | Microsoft News Centre (2023). Sustainability in the Cloud. Microsoft. See also: Microsoft commitment to net zero energy production in Sweden. | Microsoft’s Sweden data centre is 100% renewable, reducing AI carbon footprints by 98%. |
| AI Energy Trends | European Commission (2020). Energy-Efficient Cloud Computing. EU Digital Strategy | AI and cloud computing are a growing share of global electricity demand. |