The Case for University GenAI API Access

If you’ve followed our previous articles, you’ll notice we spend a significant amount of time building non-chat-based Generative AI web applications. Our portfolio includes tools for assessment marking, survey analytics, and several others currently under wraps.

To bring these applications to life, we need robust, reliable access to GenAI APIs. By building bespoke web interfaces around these APIs, we allow non-technical users to drive complex business processes. We call this “programmatic prompt engineering.” In essence, we design interfaces that construct sophisticated prompts on the user's behalf: prompts that a person would rarely have the patience or technical know-how to type into a chat box by hand.
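The kind of prompt construction described above can be sketched in a few lines. This is a minimal, hypothetical example for assessment marking: the `Criterion` structure, the `build_marking_prompt` function and the instruction wording are illustrative assumptions, not code from our marking tool. It shows how a web form that collects a rubric and a submission can turn them into a long, structured prompt the user never has to write.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """One rubric criterion (hypothetical structure for illustration)."""
    name: str
    max_marks: int
    descriptor: str


def build_marking_prompt(criteria: list[Criterion], submission: str) -> str:
    """Assemble a structured marking prompt from a rubric and a submission.

    Hypothetical example: a web form collects the rubric and the submission,
    and this function turns them into the text sent to the model.
    """
    total = sum(c.max_marks for c in criteria)
    lines = [
        "You are an assistant helping an academic mark a student submission.",
        f"Mark against the rubric below (maximum {total} marks in total).",
        "For each criterion, give a mark and a one-sentence justification.",
        "",
        "Rubric:",
    ]
    # Number each criterion so the model can refer back to it in its answer.
    for i, c in enumerate(criteria, start=1):
        lines.append(f"{i}. {c.name} ({c.max_marks} marks): {c.descriptor}")
    # Delimit the submission so it is clearly separated from the instructions.
    lines += ["", "Submission:", '"""', submission.strip(), '"""']
    return "\n".join(lines)


if __name__ == "__main__":
    rubric = [
        Criterion("Argument", 10, "Clear thesis supported by evidence."),
        Criterion("Referencing", 5, "Sources cited consistently."),
    ]
    print(build_marking_prompt(rubric, "  My essay text...  "))
```

The user only fills in a rubric and pastes a submission; the interface takes care of totals, numbering and delimiters every time.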

The Engine Under the Hood: Azure AI Foundry

To access models like GPT-5 and Llama, our team utilises Azure AI Foundry. This Platform-as-a-Service (PaaS) allows us to privately provision Large Language Models (LLMs) and access them via RESTful APIs.

By leveraging Foundry, we ensure that security and privacy are baked in. All data passed through these services is protected under our University enterprise agreement. This gives us the freedom to focus on creating value for our users without the “data anxiety” of wondering if our intellectual property is being used to train public models.

A Growing Demand for Programmatic Access

While 99% of users are currently focused on chat-based interfaces, we’ve seen a recent surge in requests for API access. Over the past 12 months, the appetite for building has grown across the board:

- Researchers requiring APIs to power large-scale data analysis.

- Educators looking to build immersive, custom teaching experiences.

- Software teams seeking to integrate GenAI features into existing campus apps.

- Students requesting access for ambitious final-year projects.

Scaling Innovation by Enabling Others

As a small innovation team, it is impossible for us to develop every single use case requested. However, our mission isn’t just to build tools — it’s to enable others to innovate. Universities are unique ecosystems overflowing with brilliant ideas and technical capability. We had to ask ourselves: What if we could provide seamless, secure API access to the entire University community? What would they build if the barriers were removed?

Introducing the AI Gateway

One way to answer those questions would be to give everyone direct access to Azure AI Foundry. However, that approach raises its own problems around cost, observability, and security.

Instead, we developed the AI Gateway: an API management layer that sits directly on top of our privately provisioned Azure AI Foundry models. It acts as the bridge between our powerful backend models and the innovators across our campus.
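To give a feel for what calling the Gateway might look like from a user's own application, here is a minimal sketch using only the Python standard library. It assumes the Gateway is built on Azure API Management, whose subscription keys are conventionally sent in the `Ocp-Apim-Subscription-Key` header, and that it accepts an OpenAI-style chat body with a `messages` list. The base URL, the `/deployments/{deployment}/chat/completions` route and the deployment name are hypothetical placeholders, not our real endpoints. The function only builds the request; sending it is left to the caller.

```python
import json
import urllib.request


def build_gateway_request(
    base_url: str,
    deployment: str,
    subscription_key: str,
    messages: list[dict],
) -> urllib.request.Request:
    """Build (but do not send) a chat request to the AI Gateway.

    Assumptions: the Gateway is fronted by Azure API Management, so the
    subscription key travels in the Ocp-Apim-Subscription-Key header, and
    the route below is a hypothetical OpenAI-style chat completions path.
    """
    url = f"{base_url.rstrip('/')}/deployments/{deployment}/chat/completions"
    body = json.dumps({"messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Ocp-Apim-Subscription-Key": subscription_key,
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_gateway_request(
        "https://ai-gateway.example.ac.uk",  # hypothetical base URL
        "gpt-5-mini",                        # hypothetical deployment name
        "YOUR-SUBSCRIPTION-KEY",
        [{"role": "user", "content": "Summarise this survey response: ..."}],
    )
    print(req.get_method(), req.full_url)
    # To actually call the Gateway: urllib.request.urlopen(req)
```

Keeping the key in a header rather than the URL means it is less likely to end up in server or proxy logs.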

API Management and Security

We’ve designed the Gateway to be as flexible as it is secure. Here is how we manage this:

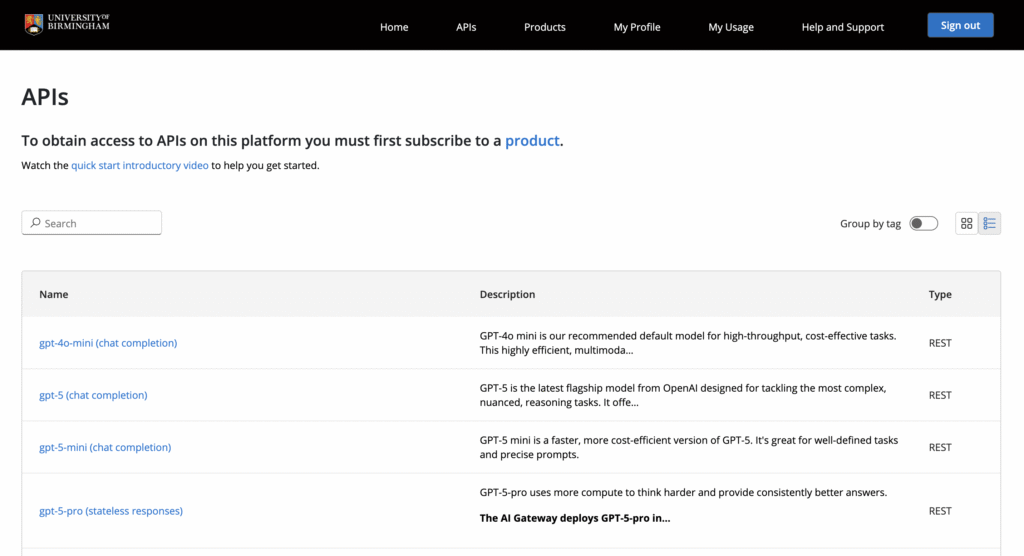

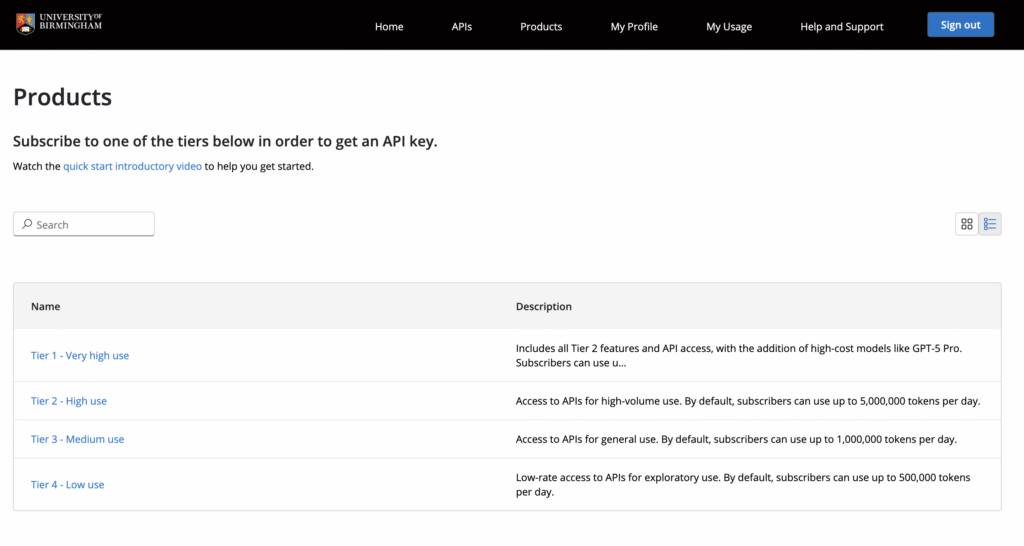

- Subscription-Based Access: Users subscribe to specific “products”, each tied to a particular set of models and a tailored rate limit. This lets us manage costs and ensure equitable usage across the University.

- Approval-Driven Workflow: Access isn’t just a “free for all.” An integrated approval process ensures that users receive the specific level of access required for their individual use case, keeping our resource allocation intentional.

- Stateless by Design: All our APIs are currently stateless. This means the Gateway doesn’t “remember” previous interactions. Users are responsible for managing state and logic within their own applications; for example, an app that needs a multi-turn conversation must resend the relevant history with each request.

- Privacy First: By keeping the service stateless at this early stage, we avoid the complexity and risk of storing sensitive user data. We provide the intelligence; the users keep the control.
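Because the Gateway is stateless, the bookkeeping for a multi-turn conversation lives entirely in the user's application. A minimal sketch of that client-side state follows. The `ClientConversation` class, the OpenAI-style role/content message format and the simple cap on how many history messages are sent are illustrative assumptions, not something the Gateway prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class ClientConversation:
    """Client-side conversation state for a stateless chat API (hypothetical).

    The server remembers nothing, so every request must carry the system
    prompt plus whatever history the application chooses to keep.
    """
    system_prompt: str
    max_messages: int = 20  # only the most recent history messages are sent
    history: list[dict] = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self.history.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.history.append({"role": "assistant", "content": text})

    def payload(self) -> dict:
        """Return the full message list to send with the next request."""
        # Guard against max_messages == 0, since history[-0:] is the whole list.
        recent = self.history[-self.max_messages:] if self.max_messages > 0 else []
        system = {"role": "system", "content": self.system_prompt}
        return {"messages": [system, *recent]}


if __name__ == "__main__":
    convo = ClientConversation("You are a helpful service desk assistant.", max_messages=4)
    convo.add_user("How do I reset my password?")
    convo.add_assistant("Use the self-service portal.")
    convo.add_user("Where is the portal?")
    print(len(convo.payload()["messages"]))  # → 4 (system prompt + 3 history messages)
```

In a real application, the model's reply from each Gateway response would be passed to `add_assistant` before the next user turn, and the application decides how much history is worth resending.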

Early User Feedback

Early feedback on the AI Gateway is promising. Matt Warwick, our Knowledge and Improvement Specialist, is building tools designed to enhance our service desk and support capabilities. Matt shared these thoughts on his experience with the platform:

Like a lot of teams experimenting with GenAI, we had early bits and pieces working nicely, but turning that into something secure, scalable and actually usable inside an enterprise platform like ServiceNow was a much bigger lift. Governance, privacy and reliable access to the right models were slowing us down. The AI Gateway changed all that for us. It centralises governance and gives us a single, secure interface to models like GPT-5 and GPT-5 mini, which made it much easier to bring LLM capabilities into our ServiceNow AI assistant without all the usual headaches.

What has also been brilliant is that the gateway fits into our development workflow, not just our production environment. We have been using it with VS Code and the Kilo Code add-on for LLM-powered code generation, which has genuinely helped the team move faster. The whole setup has allowed us to make real progress while still keeping security and platform teams happy. It has been a proper enabler for getting AI into production rather than leaving it sat in prototype land.

The AI Gateway Pilot

Innovation is as much about listening as it is about building. We aren’t afraid to pivot if a platform doesn’t resonate with our community, but to truly test the waters, we need user input. That is why we’re officially launching the AI Gateway Pilot: to find out whether there is a genuine appetite for this technology.

We are inviting a small group of colleagues from Research, Education, and Professional Services to join us in this initial phase. By opening the doors to these early adopters, we hope to see exactly what happens when colleagues stop being just “users” of AI and start building things with it.